A business created to make computer game graphics more beautiful stumbled into driving AI, one of the most important technologies of the 21st century. Rathbone Greenbank Global Sustainability Fund manager David Harrison explains what all the fuss is about.

Nvidia: from pastime to new paradigm

Last week was all about Nvidia. This American company, which we own, used to be known only to computer geeks and gamers; now it's a household name at the forefront of AI.

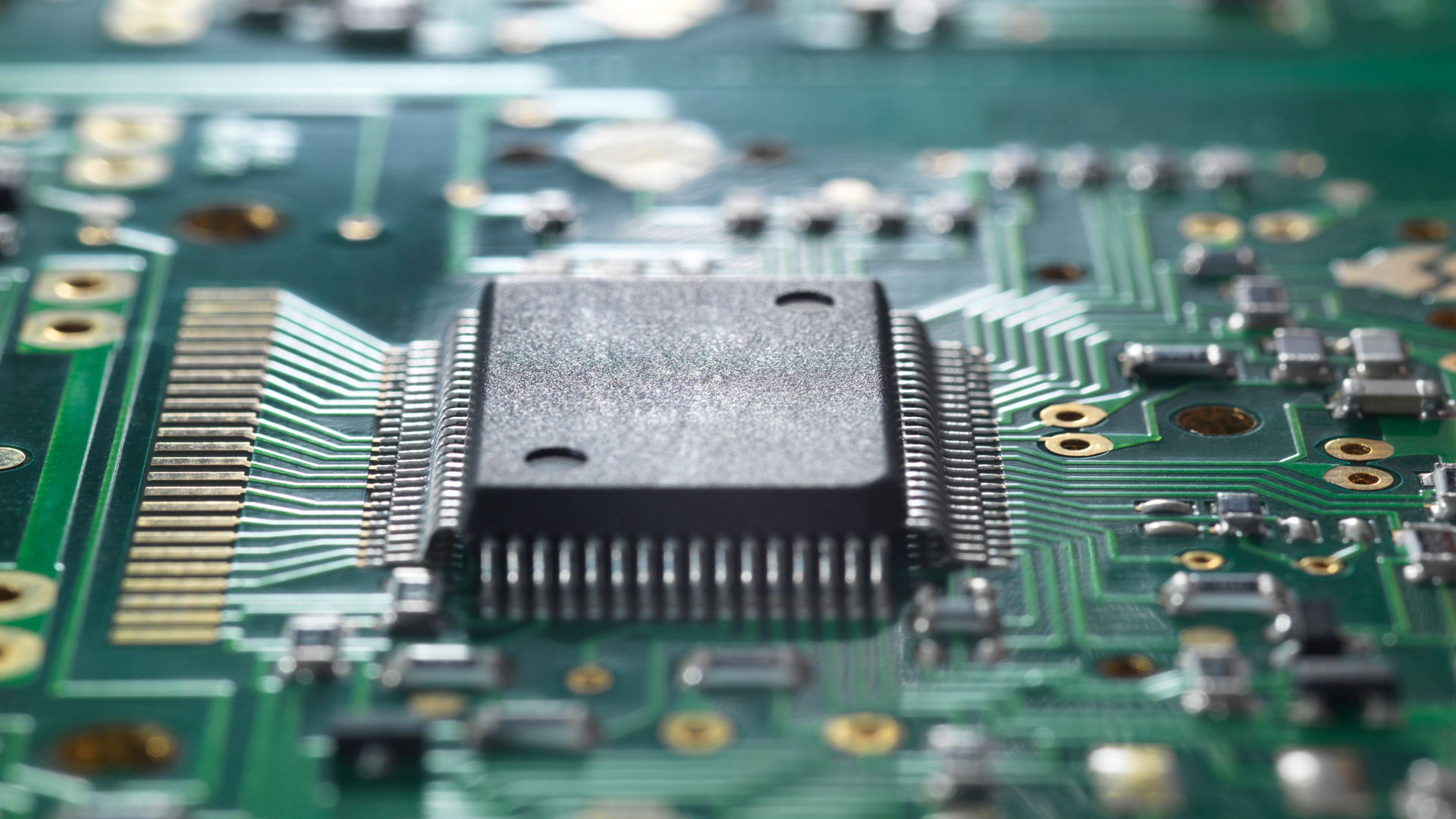

Created by three friends in the early 1990s, Nvidia focused on building computer chips that could process graphics for computer games. Back then, games were rudimentary, blocky like Lego, and virtually all two-dimensional. It takes a lot of calculations to show an image on a screen and then have it move according to both a game's pathway and the player's control of the action. By the 1990s, things had moved on from the first games, which could only render a few pixels representing shapes like flying saucers or people on blank backdrops. Now much more intricate artwork could be displayed, and games seemed to get better and better at a ferocious clip into the new millennium.

In only 20 to 30 years, computer graphics have gone from side-scrolling Mario to the ultra-realistic CGI (computer-generated imagery) scenes that pepper action movies, like Dune and Napoleon. When you boil it down, these CGI effects are really just computer game graphics spliced into digital films. This rapid improvement in computer game graphics, from dots on a screen to digitally rendered characters who look and move exactly like real people, is a visualisation of the exponential increase in computing power. As computer chip design and production techniques advanced, the cost of computing slumped even as the power increased.

AI in parallel

Nvidia focused on making graphics processing units (GPUs) rather than central processing units (CPUs) because it believed that demand would soar while the computationally challenging nature of the work would help protect it from rival companies. When Nvidia was founded, three-dimensional rendering was becoming more popular, both in gaming and in other areas of computing. Rendering that extra dimension dramatically increased the computing power needed to run these games and programs. GPUs were developed to deal with this graphics component, while the CPU could run the rest. To do this hefty work, GPUs were designed differently to CPUs. While CPUs could do sophisticated tasks, they did them one after another ('serially'). GPUs, on the other hand, needed to do many small calculations at the same time ('parallel processing').
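To make the serial/parallel distinction concrete, here is a toy sketch in Python. It applies the same small brightness calculation to every pixel of a tiny 'frame', first one pixel after another, then by handing the independent calculations to a pool of workers. The function names and the brightness rule are made up for illustration. One caveat: standard Python threads do not genuinely run this kind of arithmetic simultaneously, so this only shows the shape of the work; a real GPU runs thousands of such calculations at once in hardware.

```python
from concurrent.futures import ThreadPoolExecutor


def shade(pixel: int) -> int:
    """Hypothetical per-pixel calculation: brighten a 0-255 value by 20%, capped at 255."""
    return min(255, pixel * 12 // 10)


def render_serial(pixels: list[int]) -> list[int]:
    # CPU-style: one calculation after another.
    out = []
    for p in pixels:
        out.append(shade(p))
    return out


def render_parallel(pixels: list[int], workers: int = 4) -> list[int]:
    # GPU-style in spirit: no pixel depends on any other, so the same small
    # calculation can be handed out to many workers at once.
    # pool.map returns results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(shade, pixels))


if __name__ == "__main__":
    frame = [0, 50, 100, 200, 250]
    print(render_serial(frame))    # [0, 60, 120, 240, 255]
    print(render_parallel(frame))  # [0, 60, 120, 240, 255]
```

Both versions give the same answer precisely because each pixel's calculation is independent. That independence is what allows a GPU, or an AI workload made up of similar calculations, to spread the work across thousands of cores.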

If you've read up on AI, you'll know that much of it is predicated on 'parallel processing' – on being able to run millions of calculations simultaneously. Nvidia's work on making ever smoother graphics led it to stumble onto helping drive what many people are calling the next technological leap of our species. Nvidia's GPU chips are the best in the market for data centres – the huge warehouses of computers that provide the computing power behind 'cloud computing' and are used to develop and run AI programs.

In the last few years, demand from data centres has galloped higher. Until the end of 2022, most of Nvidia's sales came from computer game chips. Since then, data centres have dominated, and in the most recent two quarters data centre sales have surged. Nvidia's fourth-quarter (Q4) revenues were $22.1 billion (analysts had expected $20.4bn), up 265% on the same quarter a year earlier. Nvidia's profits have skyrocketed as well, rising 770% from a year ago to $12.3bn. Nvidia's share price soared more than 16% on the day it announced its Q4 results, taking the S&P 500 higher with it. The stock has accounted for a quarter of the index's year-to-date gain.
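Percentage growth figures this large are easy to misread: 'up 265%' means revenue was 3.65 times its year-earlier level, not 2.65 times. The short sketch below backs out the implied year-earlier figures from the numbers quoted above. The function name is illustrative, and the results are rounded arithmetic on the headline percentages, not reported figures.

```python
def implied_prior(current: float, growth_pct: float) -> float:
    """Back out the year-earlier figure from a current figure and its % growth.

    'Up 265%' means current = prior * (1 + 2.65), so prior = current / 3.65.
    """
    return current / (1 + growth_pct / 100)


if __name__ == "__main__":
    # Figures from the text, in US$ billions.
    print(f"Q4 revenue a year earlier: ~${implied_prior(22.1, 265):.1f}bn")  # ~$6.1bn
    print(f"Q4 profit a year earlier:  ~${implied_prior(12.3, 770):.1f}bn")  # ~$1.4bn
```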

Lots of opportunities, lots of risks

This isn't an exhortation for everyone to buy Nvidia. There are as many risks as opportunities for Nvidia right now. Firstly, many rivals are rushing to develop their own chips, although they are starting very far behind Nvidia. Secondly, this sort of rampant growth heightens the chance that the AI gold rush turns into a bubble, with too many companies overinvesting in an exciting new technology. In 2022, Nvidia's share price collapsed when the cryptocurrency boom went bust (crypto mining had been a big driver of demand for GPU chips) and it looked like appetite for chips could wane. In a couple of years, the world may be awash with far more data centres than it needs. That would lead to slashed investment and a slump in Nvidia's sales. Also, more than half of Nvidia's sales go to Microsoft, Amazon, Alphabet and Meta. That's a big concentration risk. Finally, there's the risk of becoming obsolete – Nvidia discovered a fountain of money while digging away at something completely different. We should never underestimate how fast technology advances.

Perhaps the largest question about Nvidia's future is whether the enormous purchases of data centre chips are a one-off wave of sales or a new deep pool of purchases that will recur for many years to come. If it is a wave, how high will it get and how long will it last? And if it's recurring, what will be the life cycle of these new chips? No one knows their life cycle just yet, because the technology is still in its infancy, but big tech companies' old-fashioned data centres generally have a useful life of six to eight years. Will the hardware of these new AI-focused data centres be the same? Or will it be more like mobile phones, which get swapped out every year or two as the technology gets exponentially better?

All these variables make it hard to value Nvidia. At the moment, its price is roughly 30 times next year's expected profits. That's not excessively expensive in our view, yet it's reliant on truly extraordinary profit growth: 86% for the coming year, following 581% growth last year. Some investors argue that Nvidia will fall short. We will see.
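Why does a multiple of 30 times next year's profits depend so heavily on growth? Measured against this year's profits, the same share price is about 30 × 1.86 ≈ 56 times. The sketch below works through that arithmetic using only the two numbers in the text, holding the share price constant; the function names are illustrative, and the 43% 'disappointment' scenario is a hypothetical example, not a forecast.

```python
def multiple_on_current_profits(forward_pe: float, growth_pct: float) -> float:
    """Price / this year's profit, given price / next year's expected profit
    and the profit growth expected between the two years."""
    return forward_pe * (1 + growth_pct / 100)


def forward_pe_if_growth_differs(forward_pe: float,
                                 expected_growth_pct: float,
                                 actual_growth_pct: float) -> float:
    """Price / next year's actual profit if growth turns out different from
    expectations, with the share price held constant."""
    trailing = multiple_on_current_profits(forward_pe, expected_growth_pct)
    return trailing / (1 + actual_growth_pct / 100)


if __name__ == "__main__":
    # 30x next year's expected profits, with 86% profit growth expected.
    print(round(multiple_on_current_profits(30, 86), 1))       # 55.8
    # Hypothetical: growth comes in at half the expected rate (43%).
    print(round(forward_pe_if_growth_differs(30, 86, 43), 1))  # 39.0
```

If growth arrives as expected, the multiple on next year's profits stays at 30; if it falls short, the same share price looks considerably more expensive, which is the reliance on extraordinary growth described above.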

I'm writing about Nvidia because it encapsulates the speed and violence of the shift toward AI that is happening in computing right now. It also shows how the rise of GPU technology spurred AI's development, and illustrates perfectly the blindfolded nature of technological invention. Since they were first created, computer games have been maligned and cursed by many as unhelpful, distracting pastimes. Wasteful and corrosive. Yet from them has sprung one of the greatest technological marvels of our age.